EISM

EISM’s Matrix™ platform represents the world’s first attempt to link the latest advances in physics, math, and artificial intelligence to the practical, everyday science of resource management, decision theory, and governance.

The word “Matrix” (Latin for “womb”) captures the uniquely transformative nature of the platform and its underlying technology. On this page, we will draw an initial conceptual sketch of the platform. We will continue to develop the idea over time and, with the help of our partners and your feedback, we will make more advanced versions available soon. In the meantime, we are grateful for your visit and welcome you to the Matrix™ conceptual framework.

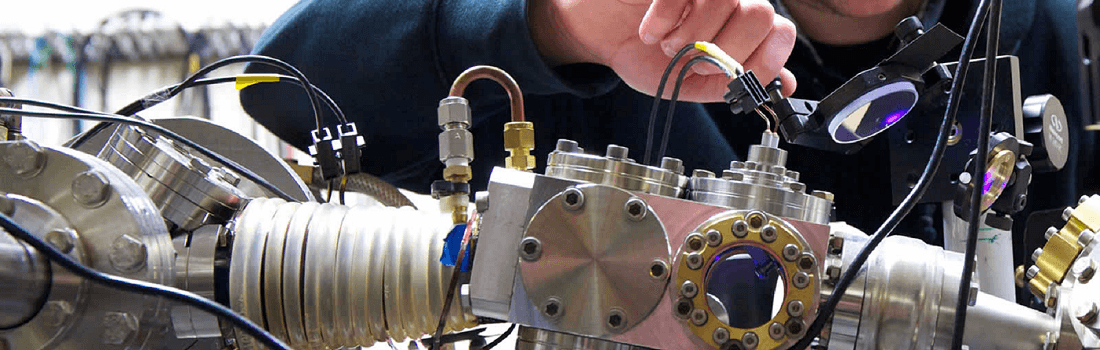

Matrix Mathematics

In mathematics, a matrix is a rectangular array or table of numbers, symbols, or expressions, arranged in rows and columns. For example, the dimension of the matrix below is 2 × 3 because there are two rows and three columns:
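As an illustration, a 2 × 3 matrix can be written as follows. The entries are arbitrary and chosen only for this sketch; what matters is the layout of two rows and three columns.

$$
A = \begin{bmatrix} 1 & 9 & -13 \\ 20 & 5 & -6 \end{bmatrix}
$$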

Rows and columns arrayed in this manner can be added, subtracted, multiplied, etc. This kind of matrix math is at the heart of linear algebra, which in turn is at the heart of machine learning, artificial intelligence, and even quantum AI.
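The operations mentioned above can be sketched in a few lines of plain Python. This is a minimal illustration, not part of the Matrix platform: the helper names `mat_add` and `mat_mul` and the example matrices are assumptions made up for this sketch.

```python
# Illustrative only: the matrices and helper names below are assumptions,
# not part of the Matrix platform.

def mat_add(a, b):
    """Element-wise sum of two matrices with the same dimensions."""
    return [[x + y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]

def mat_mul(a, b):
    """Matrix product: an (m x n) matrix times an (n x p) matrix gives (m x p)."""
    if len(a[0]) != len(b):
        raise ValueError("columns of a must equal rows of b")
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

A = [[1, 2, 3],
     [4, 5, 6]]        # 2 x 3
B = [[6, 5, 4],
     [3, 2, 1]]        # 2 x 3
C = [[1, 0],
     [0, 1],
     [2, 2]]           # 3 x 2

print(mat_add(A, B))   # [[7, 7, 7], [7, 7, 7]]
print(mat_mul(A, C))   # [[7, 8], [16, 17]]
```

Addition requires both matrices to have the same dimensions, while multiplication requires the number of columns of the first to equal the number of rows of the second, which is why `A` can be multiplied by `C` but not by `B`.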

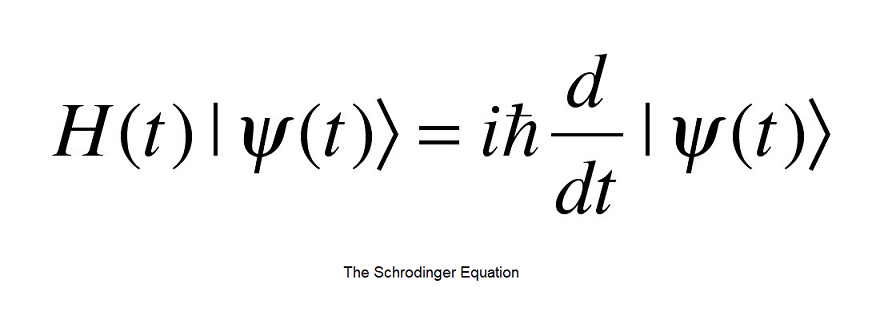

The key connection to make here takes us back to the early 20th century and the discovery of quantum mechanics, when scientists like Albert Einstein, Werner Heisenberg, and Erwin Schrödinger formulated various theories to account for the strange experimental behavior being discovered at the subatomic level. Heisenberg was the first to devise a mathematical formalism for quantum mechanics, and he did so using matrices. His formulation was manageable for the simplest atomic problems but quickly became unwieldy and complicated, to the point that many scientists were reluctant to follow it.

At this point, Erwin Schrödinger devised the now-famous Schrödinger equation, which in turn gave rise to the predominant interpretation of quantum mechanics known today, in which a subatomic particle can be in several places at the same time, a condition known as “superposition”. In contrast to the difficult and unwieldy math of Heisenberg’s formulation, Schrödinger’s equation was relatively simple. The difficult part was interpreting what it means to say that a particle is in two places at the same time. That difficulty remains to this day one of the most perplexing aspects of our understanding of nature.

Fast-forward to the latter part of the 20th century and to the work of a number of physicists, foremost among them the award-winning Israeli physicist Yakir Aharonov. Starting from Heisenberg’s matrix formulation, Aharonov devised what is known as the “two-state vector formalism”, according to which the mysterious effects of quantum mechanics are explained not by the evolution of one state vector that comes from the past to the present, but by two state vectors that, in some sense, “collapse” in the present. One of these vectors comes to the present from the past, and the other comes to the present from the future. The present, in this Aharonovian sense, is caused by both the past and the future. Unlike the various competing interpretations of quantum mechanics, Aharonov’s breakthrough has had direct experimental implications for many other areas of physics and math, among them the science of amplification. We will have a lot more to say about this in the future.
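To make the two-vector idea slightly more concrete, the standard textbook quantity associated with the two-state vector formalism is the “weak value” of an observable. The symbols below are the conventional ones and are introduced here only for illustration: $|\psi\rangle$ is the state arriving from the past (pre-selection), $\langle\phi|$ is the state arriving from the future (post-selection), and $A$ is the observable being measured.

$$
A_w = \frac{\langle \phi | A | \psi \rangle}{\langle \phi | \psi \rangle}
$$

When the overlap $\langle \phi | \psi \rangle$ is small, $A_w$ can lie far outside the range of the ordinary eigenvalues of $A$. We assume this is the effect behind the amplification mentioned above, usually called weak-value amplification.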

For now, the important conceptual connection to make is between the “two-time” physics described so rigorously and precisely by Aharonov and the latest advances in deep learning, where back-propagation and related algorithms feed the error measured at a model’s output backward to adjust its earlier stages, so that, in a loose sense, the future has an impact on the present. Consistent with Aharonov’s work, these developments in deep learning and AI in general, including the latest developments (and limitations) in the quantum realm, promise to transform the way we think about and work with nature.

All of this is relevant because at the cutting edge of both computer science and physics, the world is witnessing a growing technical and philosophical convergence, and EISM is excited to be at the forefront of it.

Top-Down AI

Broadly speaking, there are two approaches to the study of nature. Nature can be studied from the bottom up, which is to say from the concrete fundamentals to increasingly abstract and syncretic ideas, or from the top down, which is to say from the general ideas to the concrete details. Our approach on this page will be top-down. Taking a top-down approach affords us and our clients several important advantages. These advantages can be summarized as the difference between approaching a problem from the perspective of a child and approaching the same problem from the perspective of an experienced adult. Other things being equal, it is always preferable to approach a problem with the experience of an adult. That is what we aim to demonstrate in what follows and in our service to our customers in general.

What, then, are the benefits of a top-down approach to management in general and artificial intelligence in particular? We will try to answer that question in the next few paragraphs by explaining the connection between machine learning, physics and effective management. Effective management, after all, is the overriding goal of every machine learning initiative. If we are able to draw a valid connection between machine learning and physics, we would have a much more precise and scientific way to approach all of our management problems.

Let’s begin by walking through a high-level overview of deep learning processes as they are practiced today.

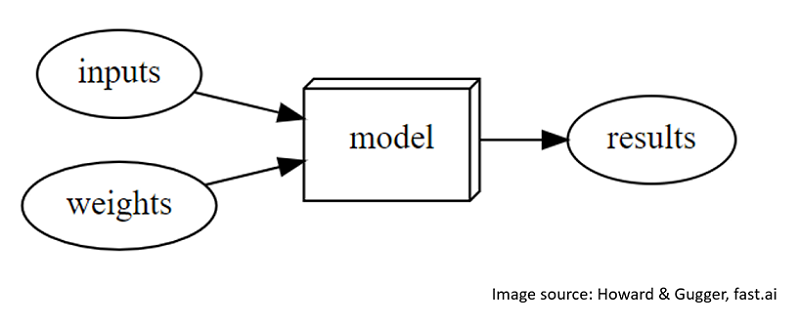

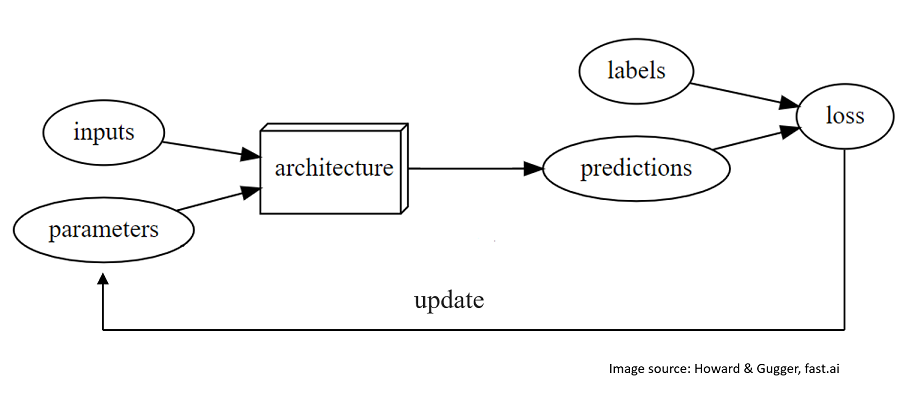

In the first diagram above, we can see a very rudimentary picture of the basic machine learning process, which differs from a conventional computer program because inputs are “weighted” before being passed through the program or model. In the second diagram, we see a more complete pass through a modern supervised machine learning cycle, including the common terminology used.
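The weighted-input process and the supervised cycle described above can be sketched as a toy training loop. This is a minimal illustration under stated assumptions, not the platform’s implementation: the function names `predict` and `train`, the learning rate, and the training data (generated from the made-up rule y = 2·x1 − x2 + 1) are all hypothetical.

```python
# A toy supervised-learning loop. The data, learning rate, and names are
# illustrative assumptions, not the Matrix platform's implementation.

def predict(weights, bias, inputs):
    """Weight each input, sum the results, and add a bias."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias

def train(samples, epochs=2000, learning_rate=0.05):
    """Fit the weights by gradient descent on squared error."""
    n_features = len(samples[0][0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            # Forward pass: compare the model's output with the known answer.
            error = predict(weights, bias, inputs) - target
            # Backward pass: move each weight against its share of the error.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias -= learning_rate * error
    return weights, bias

# Hypothetical training data generated by the rule y = 2*x1 - x2 + 1.
samples = [((0.0, 0.0), 1.0), ((1.0, 0.0), 3.0),
           ((0.0, 1.0), 0.0), ((1.0, 1.0), 2.0)]

weights, bias = train(samples)
print(weights, bias)   # weights approach [2, -1] and the bias approaches 1
```

Each pass through the loop is one turn of the cycle: the inputs are weighted, the result is compared with the expected answer, and the weights are adjusted according to the error.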

The key question that we have posed in our research is whether we can apply these basic machine learning concepts to our social and political processes. In other words, what if instead of a “computer model” we thought of a city? And what if instead of inputs into the model, we thought of citizens? Lastly, if we could do that, could we also think of the “weights” as the values that we assign to those citizens as they pass through the city? What would be the results of such a model if we iterated through it many times and changed the “weights” of persons depending on the model results?

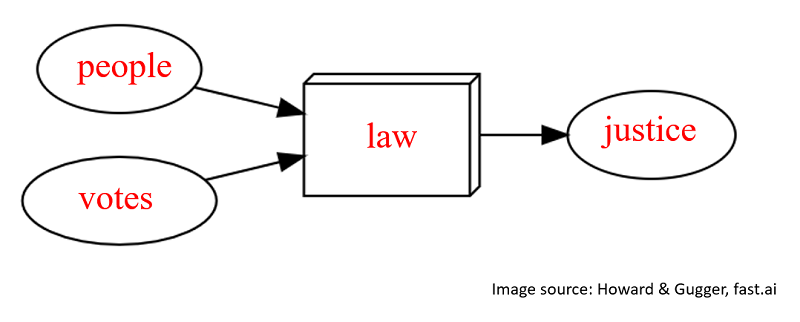

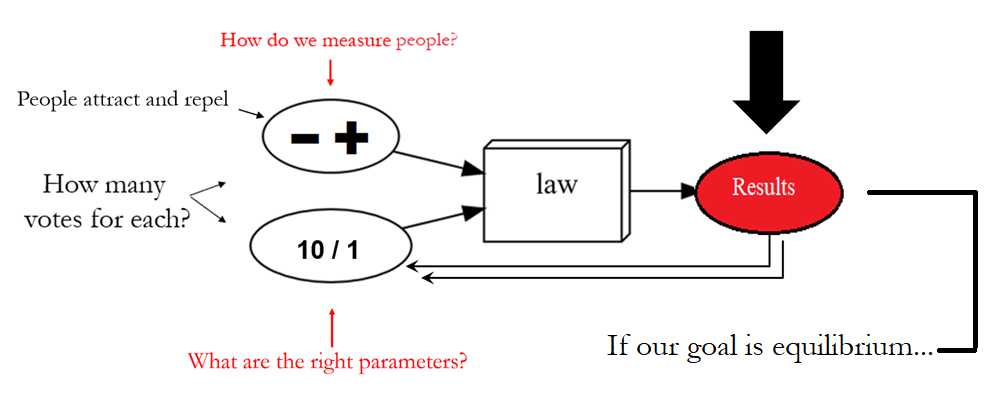

In the graph above, we see the substitutions we just mentioned, i.e., the model becomes law, inputs become people, weights become votes, and results are a measure of justice. If we do this, a natural question arises: What does machine learning tell us about the process?

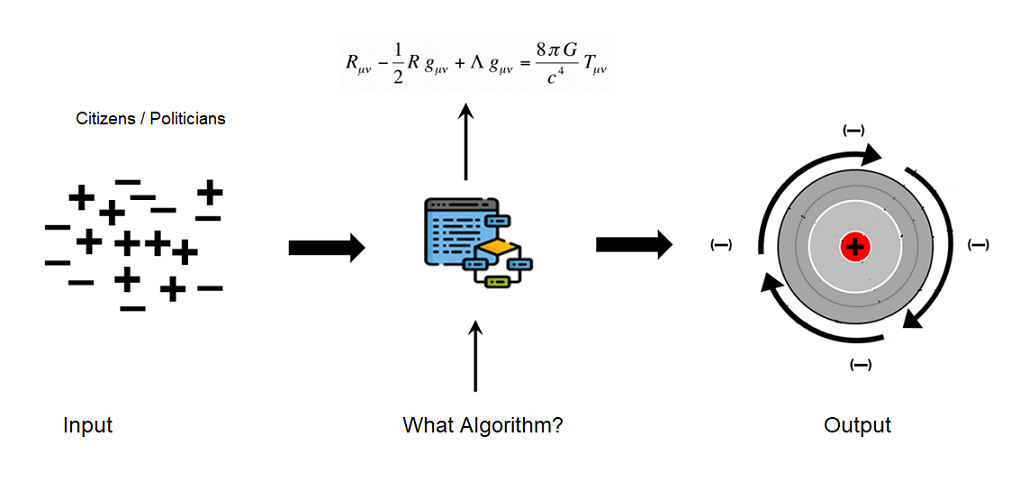

In a fascinating turn of events, we tried running these ideas through a basic machine learning model. None of the results were very interesting until we ran the simulation on an unsupervised machine learning model. To do so, we simply gave all human acts a value between -1 and +1, with -1 representing repulsion and +1 representing attraction. We then allowed our simulated humans to interact and ran them through the model. Much to our surprise, when we asked for an equilibrium between all of the attraction and all of the repulsion in a city, the mathematics that emerged from the model was very similar to Newton’s universal law of gravitation. On further experimentation, what emerged from the machine learning model was the Einstein field equations.
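For reference, the two equations named above have the following standard textbook forms. They are reproduced here only to fix notation; how the simulation’s attraction and repulsion values map onto these symbols is not specified in this sketch.

Newton’s universal law of gravitation, for two masses $m_1$ and $m_2$ separated by a distance $r$, with gravitational constant $G$:

$$
F = G \, \frac{m_1 m_2}{r^2}
$$

The Einstein field equations, where $G_{\mu\nu}$ is the Einstein tensor describing spacetime curvature, $g_{\mu\nu}$ is the metric, $\Lambda$ is the cosmological constant, $T_{\mu\nu}$ is the stress-energy tensor, and $c$ is the speed of light:

$$
G_{\mu\nu} + \Lambda g_{\mu\nu} = \frac{8 \pi G}{c^4} \, T_{\mu\nu}
$$

In the usual reading, the matter term on the right-hand side is the source of gravitational attraction, while a positive $\Lambda$ acts as a repulsive contribution commonly associated with dark energy.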

In hindsight, we should not have been so surprised that the Einstein field equations should emerge as a solution to our predicament because attraction and repulsion have always been and will always be two of the most fundamental forces in nature. The great part about this is that even though we don’t really know what these forces are (that is, we still do not understand gravity and dark energy), we certainly understand how they interact. They interact with the astounding precision of quantum mechanics and general relativity. This has important practical implications for everything that matters to humans, not the least of which is the effective management of the planet.

Practical Applications

At the beginning of the scientific revolution, in the preface to his magnum opus, the Principia, Isaac Newton expressed regret at not being able to understand the forces of attraction and repulsion.

Several centuries later, we remain just as mystified by these two forces as Newton was in his day. However, thanks to the progress of science and especially to the latest advances in machine learning, we have more clues than Newton did. The most important point to ponder here is that even if we have not arrived at a complete understanding of the forces of attraction and repulsion, we have at least come to understand how the extremely precise mathematics that governs them can be mapped to human interaction. That is a step in the right direction.

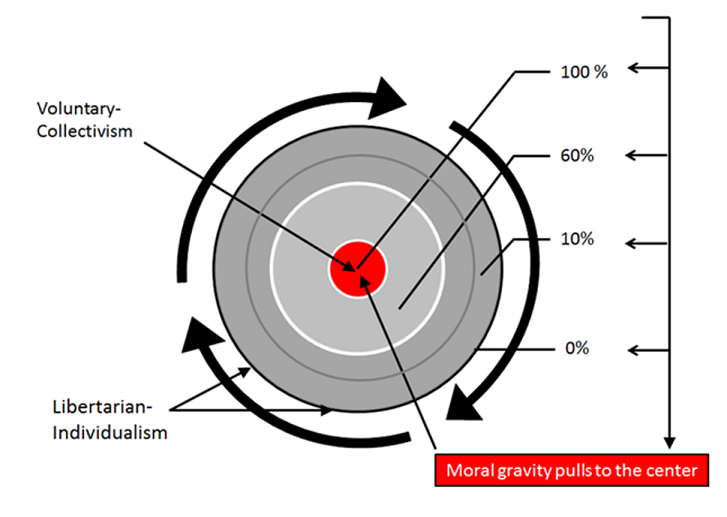

As we can see in the graph above, if in fact our goal is better management of our cities and organizations, what we need to do is understand the mathematics of general relativity and make our decisions according to that math by weighing the various inputs of citizens according to their true value.

If we do this correctly, what will emerge are cities, companies, nations, and a planet that are more closely aligned with the mathematics that governs order in nature.

We will have a lot more to say about this in the near future. For the moment, we simply wanted to make this basic outline available to you. We believe understanding this framework and approaching all lower-order management challenges in this top-down way will deliver unprecedented value to all humans, without exception.

If you would like to talk about the Matrix platform, please feel free to contact us via email or phone. We’d be happy to answer any questions or engage at any level that interests you.